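Recently, Stephen V. David and his group at Oregon Health & Science University in the United States showed that convolutional neural network models describe the encoding subspace of local circuits in auditory cortex. The paper was published in Nature Neuroscience on February 23, 2026.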

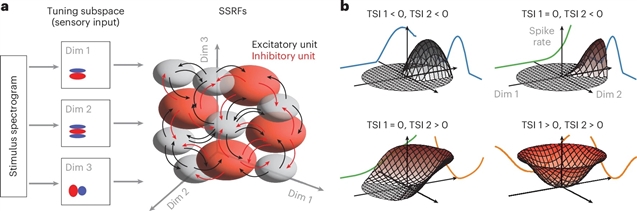

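To address the difficulty of interpreting CNN-based encoding models, the team developed a linear-nonlinear subspace model that identifies the most informative sensory dimensions captured by a CNN. They trained a CNN on single-neuron data recorded from ferret auditory cortex during presentation of a large set of natural sounds. Each neuron's linear tuning subspace was computed by applying dimensionality reduction to the gradient of the CNN output with respect to its input. Projections onto this subspace were then combined nonlinearly to predict neural activity. The resulting model was functionally equivalent to the CNN. Analysis of the trained models showed that the responses of local neural populations sparsely tiled a shared stimulus subspace. Encoding properties also differed between cell types and cortical layers, reflecting their position in the cortical circuit. More generally, these results establish a framework for interpreting deep-learning-based encoding models.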
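The gradient-based subspace step can be illustrated with a small NumPy sketch. This is not the authors' code: a toy two-layer function stands in for the trained CNN, its gradient is written analytically, and the dimensionality reduction is a plain SVD of the stacked gradients. All names and sizes (`model`, `model_gradient`, `n_inputs`, `true_dim`, the rank threshold) are assumptions made for illustration. As a shortcut, the toy model itself is re-evaluated on the projected stimuli instead of fitting a separate nonlinearity on the projections.

```python
import numpy as np

rng = np.random.default_rng(0)

# Hypothetical sizes, chosen only for this illustration.
n_inputs = 40     # flattened stimulus dimension (e.g. frequency bins x time lags)
n_stimuli = 2000  # number of stimulus samples
true_dim = 3      # the toy model depends on only 3 stimulus dimensions

# Toy stand-in for a trained network: r(x) = w2 . tanh(W1 x)
W1 = rng.standard_normal((true_dim, n_inputs))
w2 = rng.standard_normal(true_dim)


def model(x):
    """Predicted response for each row of x, shape (n_samples,)."""
    return np.tanh(x @ W1.T) @ w2


def model_gradient(x):
    """Analytic gradient of the response with respect to the input.

    dr/dx = W1^T (w2 * (1 - tanh^2(W1 x))), one row per stimulus sample.
    """
    h = np.tanh(x @ W1.T)            # (n_samples, true_dim)
    return ((1.0 - h ** 2) * w2) @ W1  # (n_samples, n_inputs)


# Sample stimuli and collect the gradient at each one.
X = 0.3 * rng.standard_normal((n_stimuli, n_inputs))
G = model_gradient(X)

# Dimensionality reduction: SVD of the stacked gradients.
# Rows of Vt are orthonormal stimulus directions, ordered by how strongly
# the model's output is sensitive to them across the stimulus set.
_, S, Vt = np.linalg.svd(G, full_matrices=False)

# Keep directions with non-negligible singular values (assumed threshold).
k = int(np.sum(S > 1e-8 * S[0]))
basis = Vt[:k]                       # (k, n_inputs) tuning subspace

# Project the stimuli into the subspace and map them back to input space.
P = X @ basis.T                      # (n_stimuli, k) subspace projections
X_proj = P @ basis                   # stimuli restricted to the subspace

# If the subspace captures everything the model uses, predictions match.
max_err = np.max(np.abs(model(X) - model(X_proj)))
print("subspace dimensions kept:", k)
print("max prediction difference:", max_err)
```

In this toy case the gradients lie exactly in a low-dimensional space, so the projected stimuli reproduce the model output to numerical precision. For a real CNN the singular values would decay gradually, and the number of retained dimensions becomes a modelling choice.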

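The researchers note that convolutional neural networks (CNNs) provide powerful models of neural sensory encoding, but their complexity makes it difficult to discern the computations that support their performance.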

Appendix: original English abstract

Title: Convolutional neural network models describe the encoding subspace of local circuits in auditory cortex

Author: Jereme C. Wingert, Satyabrata Parida, Sam V. Norman-Haignere, Stephen V. David

Issue&Volume: 2026-02-23

Abstract: Convolutional neural networks (CNNs) provide powerful models of neural sensory encoding, but their complexity makes it difficult to discern computations that support their performance. Here, to address this limitation, we developed a linear–nonlinear subspace model that identifies the most informative sensory dimensions captured by a CNN. A CNN was trained on single-neuron data recorded from auditory cortex of ferrets during presentation of a large natural sound set. Each neuron’s linear tuning subspace was computed by applying dimensionality reduction to the gradient of CNN output relative to input. Subspace projections were combined nonlinearly to predict neural activity. The resulting model was functionally equivalent to the CNN. Analysis of trained models showed that responses of local neural populations sparsely tiled a shared stimulus subspace. Encoding properties also differed between cell types and layers, reflecting their position in the cortical circuit. More generally, these results establish a framework for interpreting deep-learning-based encoding models.

DOI: 10.1038/s41593-026-02216-0

Source: https://www.nature.com/articles/s41593-026-02216-0

Nature Neuroscience: launched in 1998 and published by Springer Nature. Latest IF: 28.771

Official website: https://www.nature.com/neuro/

Submission link: https://mts-nn.nature.com/cgi-bin/main.plex