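Recently, Aydogan Ozcan's team at the University of California, Los Angeles (UCLA) reported a study of optical generative models. The paper was published in Nature on August 27, 2025.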

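Generative models cover a wide range of applications, including image and video synthesis, natural language processing, and molecular design, among many others. As digital generative models grow larger, performing scalable inference quickly and energy-efficiently becomes a challenge.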

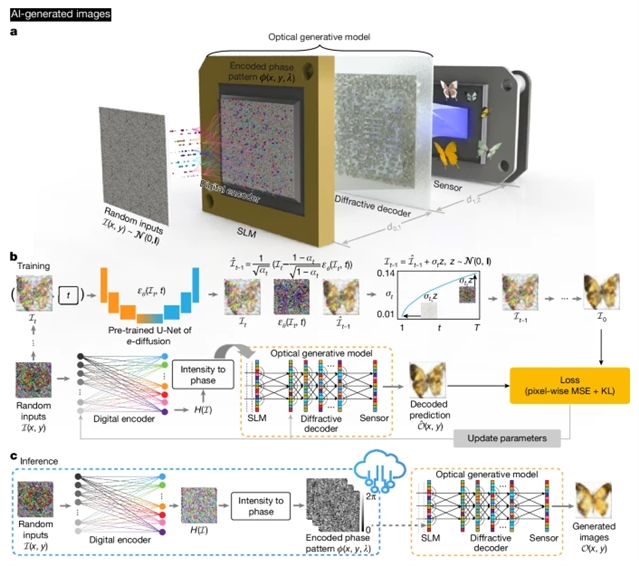

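The team proposed optical generative models inspired by diffusion models. A shallow, fast digital encoder first maps random noise into phase patterns, which serve as optical generative seeds for the desired data distribution; a jointly trained, free-space-based reconfigurable decoder then processes these seeds all-optically to create never-before-seen images that follow the target data distribution. Apart from the illumination power and the random seed generation by the shallow encoder, these optical generative models consume no computing power during image synthesis.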
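The encoder-then-optical-decoder pipeline described above can be illustrated with a small numerical simulation. The following is only a minimal sketch under stated assumptions, not the authors' implementation: the one-layer encoder, the single random decoder phase mask, the angular spectrum propagation model, and every parameter value (wavelength, pixel pitch, distance, grid size) are hypothetical choices made for illustration, and nothing here is trained.

```python
import numpy as np

def angular_spectrum(field, wavelength, pitch, distance):
    """Propagate a square complex field through free space (angular spectrum method)."""
    n = field.shape[0]
    fx = np.fft.fftfreq(n, d=pitch)                      # spatial frequencies (1/m)
    fxx, fyy = np.meshgrid(fx, fx, indexing="ij")
    arg = 1.0 / wavelength**2 - fxx**2 - fyy**2
    propagating = arg > 0                                # drop evanescent components
    kz = 2 * np.pi * np.sqrt(np.where(propagating, arg, 0.0))
    transfer = np.where(propagating, np.exp(1j * kz * distance), 0.0)
    return np.fft.ifft2(np.fft.fft2(field) * transfer)

def shallow_encoder(noise, weights):
    """Hypothetical one-layer encoder: noise vector -> phase pattern in [0, 2*pi]."""
    n = int(np.sqrt(weights.shape[0]))
    return (np.pi * (1.0 + np.tanh(weights @ noise))).reshape(n, n)

def generate(noise, enc_weights, decoder_phase,
             wavelength=520e-9, pitch=8e-6, distance=0.05):
    """Digital seed generation followed by a simulated all-optical decoder."""
    seed_phase = shallow_encoder(noise, enc_weights)     # digital step
    field = np.exp(1j * seed_phase)                      # phase-only seed, uniform illumination
    field = angular_spectrum(field, wavelength, pitch, distance)
    field = field * np.exp(1j * decoder_phase)           # decoder modelled as one phase mask
    field = angular_spectrum(field, wavelength, pitch, distance)
    return np.abs(field) ** 2                            # intensity recorded at the sensor

# Assumed toy setup: random (untrained) weights and decoder mask.
rng = np.random.default_rng(0)
n, latent_dim = 32, 64
noise = rng.standard_normal(latent_dim)
enc_weights = 0.1 * rng.standard_normal((n * n, latent_dim))
decoder_phase = rng.uniform(0.0, 2 * np.pi, size=(n, n))
image = generate(noise, enc_weights, decoder_phase)
```

In this sketch the only digital computation per sample is the small matrix product in `shallow_encoder`; the remaining steps stand in for physical light propagation. In the reported system the encoder and decoder are trained jointly, which this untrained toy does not attempt.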

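The team reported the optical generation of monochrome and multicolour images of handwritten digits, fashion products, butterflies, human faces, and artworks, following the data distributions of the MNIST, Fashion-MNIST, Butterflies-100, and Celeb-A datasets and of Van Gogh's paintings and drawings, respectively, with overall performance comparable to that of digital neural-network-based generative models. To demonstrate the models experimentally, they used visible light to generate images of handwritten digits and fashion products. They also generated Van Gogh-style artworks using both monochrome and multiwavelength illumination. These optical generative models might pave the way for energy-efficient and scalable inference tasks, further exploiting the potential of optics and photonics for artificial-intelligence-generated content.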

Appendix: original English abstract

Title: Optical generative models

Author: Chen, Shiqi; Li, Yuhang; Wang, Yuntian; Chen, Hanlong; Ozcan, Aydogan

Issue&Volume: 2025-08-27

Abstract: Generative models cover various application areas, including image and video synthesis, natural language processing and molecular design, among many others [1-11]. As digital generative models become larger, scalable inference in a fast and energy-efficient manner becomes a challenge [12-14]. Here we present optical generative models inspired by diffusion models [4], where a shallow and fast digital encoder first maps random noise into phase patterns that serve as optical generative seeds for a desired data distribution; a jointly trained free-space-based reconfigurable decoder all-optically processes these generative seeds to create images never seen before following the target data distribution. Except for the illumination power and the random seed generation through a shallow encoder, these optical generative models do not consume computing power during the synthesis of the images. We report the optical generation of monochrome and multicolour images of handwritten digits, fashion products, butterflies, human faces and artworks, following the data distributions of MNIST [15], Fashion-MNIST [16], Butterflies-100 [17], Celeb-A datasets [18], and Van Gogh’s paintings and drawings [19], respectively, achieving an overall performance comparable to digital neural-network-based generative models. To experimentally demonstrate optical generative models, we used visible light to generate images of handwritten digits and fashion products. In addition, we generated Van Gogh-style artworks using both monochrome and multiwavelength illumination. These optical generative models might pave the way for energy-efficient and scalable inference tasks, further exploiting the potentials of optics and photonics for artificial-intelligence-generated content.

DOI: 10.1038/s41586-025-09446-5

Source: https://www.nature.com/articles/s41586-025-09446-5

Nature: founded in 1869 and published by Springer Nature. Latest IF: 69.504

Official website: http://www.nature.com/

Submission link: http://www.nature.com/authors/submit_manuscript.html